As intelligence scales, pattern recognition trades robustness for sensitivity. At extreme levels, the mind becomes paralyzed by apophenia, feedback loops, and the cognitive uncertainty principle.

The concept of boundless intelligence has long captivated human imagination, from science fiction narratives of hyper-intelligent AI to philosophical speculations about post-human evolution. If human cognitive capacity has steadily increased (or at least specialized) over millennia, could there theoretically exist a mind with an IQ of one billion? A rigorous examination of cognitive science, psychometrics, and the fundamental laws of information theory suggests otherwise. Intelligence, at its core, is a sophisticated engine for pattern recognition. As this engine becomes more sensitive, it eventually encounters a critical threshold. Beyond a certain point, perhaps around an IQ of 260, the very mechanisms that enable high intelligence begin to undermine it, leading to self-referential feedback loops, false pattern detection, and a cognitive ceiling imposed by a cognitive analogue of the uncertainty principle.

Intelligence as Pattern Recognition

To understand the limits of intelligence, we must first define its operational basis. Cognitive science increasingly views the human brain’s superior capabilities, ranging from language and invention to imagination, as emergent properties of “superior pattern processing” (SPP). The brain functions by electrochemically encoding, integrating, and manipulating perceived or mentally fabricated patterns within distributed neuronal networks.

This conceptualization aligns closely with the psychometric construct of “fluid intelligence,” which is the ability to reason, solve novel problems, and identify relationships independent of acquired knowledge. The most robust psychometric tests for general intelligence, or the g factor, such as Raven’s Progressive Matrices, are explicitly designed as pattern completion tasks. They measure how efficiently a mind can extrapolate rules from limited data.

Therefore, an increase in IQ essentially represents an increase in the speed, resolution, and complexity of pattern recognition. A hypothetical entity with an IQ of 200 does not merely know more facts than someone with an IQ of 100; rather, it can detect subtler, more abstract patterns across broader and more disparate datasets with greater efficiency.

The Trade-off: Sensitivity Versus Apophenia

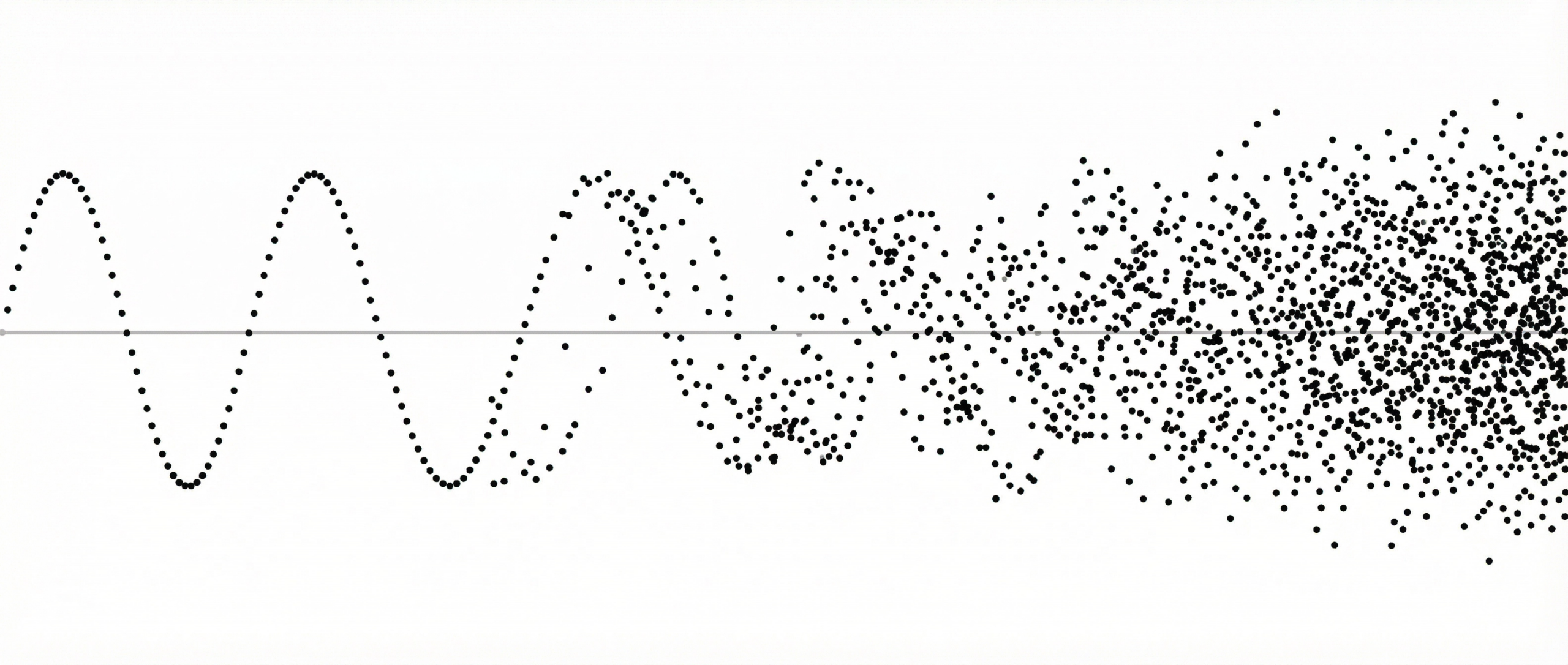

As a pattern recognition system becomes more sensitive, it faces an inescapable statistical trade-off between false negatives (failing to see a real pattern) and false positives (seeing a pattern where none exists). In human psychology, the disposition to perceive meaningful patterns in random or meaningless data is known as apophenia.
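The trade-off described above can be made concrete with a toy signal-detection sketch. Everything in it is an assumption chosen for illustration rather than a model taken from the literature cited here: the "evidence" for a pattern is drawn from a standard normal distribution when no pattern exists and from a normal distribution shifted by a hypothetical `signal_mean` when one does, and the detector reports a pattern whenever the evidence exceeds a `threshold`. Lowering the threshold stands in for greater sensitivity.

```python
import math
from typing import Tuple


def normal_cdf(x: float, mean: float = 0.0, std: float = 1.0) -> float:
    """Cumulative distribution function of a normal distribution."""
    return 0.5 * (1.0 + math.erf((x - mean) / (std * math.sqrt(2.0))))


def error_rates(threshold: float, signal_mean: float = 1.5) -> Tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate) for a detector
    that reports "pattern" whenever the evidence exceeds `threshold`.

    Illustrative assumption: evidence is N(0, 1) when no pattern exists
    and N(signal_mean, 1) when a real pattern is present.
    """
    # Noise alone exceeds the threshold: a pattern is seen where none exists.
    false_positive = 1.0 - normal_cdf(threshold, mean=0.0)
    # A real signal falls below the threshold: a real pattern is missed.
    false_negative = normal_cdf(threshold, mean=signal_mean)
    return false_positive, false_negative


if __name__ == "__main__":
    # Moving down the list lowers the threshold, i.e. raises sensitivity.
    for threshold in (2.0, 1.0, 0.0):
        fp, fn = error_rates(threshold)
        print(f"threshold={threshold:+.1f}  "
              f"false positives={fp:.3f}  false negatives={fn:.3f}")
```

In this sketch, any threshold change that reduces missed patterns also increases imagined ones. Only a larger separation between the two distributions (better evidence) improves both rates at once.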

While moderate levels of pattern-seeking are highly adaptive and form the basis of creativity and innovation, hyper-sensitive pattern detection runs the risk of overfitting. In machine learning, overfitting occurs when a model learns the noise in the training data rather than the underlying signal, rendering it unable to generalize to new situations. Similarly, a hyper-intelligent mind would possess such an exquisite sensitivity to correlations that it would inevitably begin to detect “rules” in pure randomness.

At extreme levels of intelligence, the mind would be bombarded by spurious correlations. The cognitive load required to distinguish true causal relationships from coincidental noise would grow exponentially. Thus, the smarter a system becomes, the faster it recognizes patterns, but this same speed and sensitivity raise its rate of false detection and force it to reverse those detections constantly and rapidly.
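A small simulation gives a feel for how spurious correlations accumulate in pure noise. This is a minimal sketch under stated assumptions, not a model of any actual mind: the helper names (`pearson`, `count_spurious`, `random_series`), the series counts, and the thresholds are all hypothetical choices. Every series is independent Gaussian noise, so any pair that clears the correlation threshold is a false detection by construction.

```python
import itertools
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def random_series(n_series, n_samples, seed=0):
    """Generate independent Gaussian noise series (no real structure)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(n_samples)]
            for _ in range(n_series)]


def count_spurious(series, threshold):
    """Count pairs of series whose absolute correlation exceeds `threshold`.

    Because the inputs are independent noise, every hit is a false positive.
    """
    return sum(
        1
        for a, b in itertools.combinations(series, 2)
        if abs(pearson(a, b)) > threshold
    )


if __name__ == "__main__":
    data = random_series(n_series=40, n_samples=20)
    n_pairs = math.comb(len(data), 2)
    # A lower threshold plays the role of a more sensitive detector.
    for threshold in (0.6, 0.4, 0.2):
        hits = count_spurious(data, threshold)
        print(f"threshold={threshold:.1f}: {hits} of {n_pairs} pairs flagged")
```

Two effects compound here. The number of candidate pairs alone grows quadratically with the number of series observed, and on a fixed dataset a lower threshold can only flag the same number of pairs or more.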

The Law of Diminishing Returns in Psychometrics

This theoretical vulnerability is mirrored in empirical psychometrics by Spearman’s Law of Diminishing Returns (SLODR). Formulated by Charles Spearman, the pioneer of the g factor, SLODR posits that as general cognitive ability increases, the g factor accounts for a progressively smaller proportion of the variance in cognitive performance.

In practical terms, this means that at very high levels of intelligence, cognitive abilities become more differentiated and less cohesive. The signal-to-noise ratio of intelligence measurement degrades significantly. Psychometricians note that above an IQ of approximately 160, quantitative estimates of intelligence lose their statistical validity; the measurement encounters a ceiling effect where the signal of general intelligence is overwhelmed by the noise of specific, highly specialized cognitive traits.

| Cognitive Level | Pattern Recognition Characteristics | System Stability |
| --- | --- | --- |
| Average (IQ 100) | Detects obvious, immediate patterns; high rate of false negatives. | Highly stable; relies on heuristics and crystallized knowledge. |
| Gifted (IQ 130-145) | Detects abstract, non-obvious patterns; balances innovation with reality testing. | Stable; optimal signal-to-noise ratio for complex problem solving. |
| Profound (IQ 160+) | Hyper-sensitive to subtle correlations; increasing risk of overfitting (apophenia). | Decreasing stability; SLODR indicates fragmentation of general intelligence. |
| Theoretical (IQ 260+) | Detects patterns in noise; rapid false positive generation and reversal. | Unstable; subject to feedback loops and chaotic oscillation. |

Feedback Loops and Cognitive Oscillation

If we extrapolate this trajectory to a hypothetical IQ of 260 or beyond, the cognitive system enters a state of profound instability. A mind operating at this level would not simply process external data; it would continuously model and analyze its own cognitive processes. This introduces self-referential feedback loops.

In dynamical systems theory, when a feedback loop becomes too tight or too sensitive, the system reaches a bifurcation point, a critical threshold where it transitions from stable equilibrium to chaotic oscillation. For a hyper-intelligent mind, a new piece of information might trigger a cascade of pattern recognition, leading to a sudden spike in apparent cognitive output (e.g., jumping from an effective IQ of 260 to 265). However, the system would almost immediately recognize the fragility or over-fitted nature of these new patterns, forcing a rapid correction or reversal back down to 255.
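The transition from equilibrium to oscillation can be seen in the logistic map, a standard textbook example from dynamical systems theory. It is swapped in here purely as an analogy and is not a model of cognition. The map iterates x → r·x·(1 − x), where the parameter `r` plays the role of feedback strength. The function names, the starting value, and the three values of `r` below are illustrative choices.

```python
def logistic_tail(r, x0=0.2, burn_in=1000, keep=50):
    """Iterate the logistic map x -> r * x * (1 - x) and return the last
    `keep` states after discarding `burn_in` transient steps."""
    x = x0
    for _ in range(burn_in):
        x = r * x * (1.0 - x)
    tail = []
    for _ in range(keep):
        x = r * x * (1.0 - x)
        tail.append(x)
    return tail


def distinct_states(tail, digits=6):
    """Number of distinct long-run states, after rounding away float noise."""
    return len({round(x, digits) for x in tail})


if __name__ == "__main__":
    # r is the feedback strength: weak, moderate, and strong feedback.
    for r in (2.8, 3.2, 3.9):
        tail = logistic_tail(r)
        print(f"r={r}: {distinct_states(tail)} distinct long-run state(s), "
              f"last values {[round(x, 3) for x in tail[-4:]]}")
```

With weak feedback (`r = 2.8`) the system settles on the single fixed point 1 − 1/r. Past the first bifurcation at r = 3 it alternates between two values (`r = 3.2`). With strong feedback (`r = 3.9`) it never settles, even though the update rule stays the same.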

Instead of achieving a stable, god-like omniscience, the mind would become trapped in a high-frequency oscillation of rapid belief formation and immediate deconstruction. It would be paralyzed by its own capacity to see every possible permutation of reality, unable to settle on a single, actionable truth.

The Uncertainty Principle of Cognition

The ultimate barrier to an IQ of one billion, however, is not merely biological or structural, but fundamental to the nature of information and measurement. This barrier is analogous to the Heisenberg Uncertainty Principle in quantum mechanics, which states that certain pairs of physical properties cannot be simultaneously known to arbitrary precision.

In the realm of cognition, a similar principle applies to self-referential systems. According to Gödel’s second incompleteness theorem, no consistent formal system expressive enough to encode basic arithmetic can prove its own consistency. By analogy, a mind attempting to fully map, understand, and optimize its own intelligence must act as both the observer and the observed.

As the system’s intelligence approaches theoretical extremes, the act of measuring or processing its own state inherently alters that state. The cognitive uncertainty principle dictates that there is a fundamental limit to how precisely a mind can optimize its own pattern recognition algorithms without introducing irreducible noise. At the critical point, the effort to increase intelligence (to reduce uncertainty about the universe) generates an equal or greater amount of internal uncertainty (noise from self-observation).

Conclusion

The dream of an entity possessing an IQ of one billion fundamentally misunderstands the nature of intelligence. Intelligence is not a linear scale of absolute knowledge, but a dynamic, delicate mechanism of pattern recognition.

As a cognitive system scales in power, it inevitably trades robustness for sensitivity. It becomes increasingly susceptible to apophenia, constrained by diminishing returns, and destabilized by self-referential feedback loops. Ultimately, the fundamental laws of information and uncertainty dictate that there is a hard cognitive ceiling. Long before reaching an IQ of one billion, a mind would hit a critical point of instability—a turbulent frontier where the brilliance of recognizing every pattern dissolves into the chaos of knowing that no pattern is absolute.